Robust real-time Vision-aided Inertial Navigation on Google GLASS

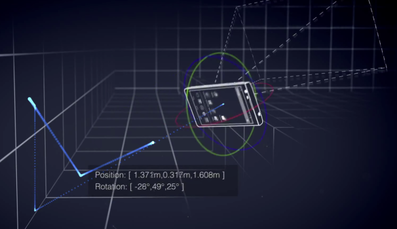

In [C8-C9], we introduced an Iterative Kalman Smoother (IKS) for tracking the 3D motion of a mobile device. In contrast to existing Extended Kalman Filter (EKF)-based approaches, smoothing can better approximate the underlying nonlinear system and measurement models by re-linearizing them, increasing the convergence rate of critical parameters (e.g., IMU-camera clock drift) and improving the positioning accuracy during challenging conditions (e.g., scarcity of visual features). In contrast to existing inverse filters, the proposed IKS’s numerical stability allows for efficient 32-bit implementations on resource-constrained devices, such as cell phones and wearables. We validated the IKS for performing vision-aided inertial navigation on Google Glass, a wearable device with limited sensing and processing, and demonstrated positioning accuracy comparable to that achieved on cell phones. To the best of our knowledge, this work presents the first proof-of-concept real-time 3D indoor localization system on a commercial-grade wearable computer.
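As a rough illustration of why re-linearization helps, the sketch below compares a single EKF update with an iterated (Gauss-Newton) update on a scalar toy problem. It is not the IKS of [C8-C9]: the measurement model `h(x) = x*x`, the prior, the noise values, and all function names are assumptions chosen only for illustration.

```python
# Toy comparison: single EKF update vs. iterated (re-linearized) update.
# NOT the IKS from [C8-C9]; the model and all numbers are illustrative assumptions.

def h(x):
    """Hypothetical nonlinear measurement model."""
    return x * x

def jac_h(x):
    """Derivative (Jacobian) of h evaluated at x."""
    return 2.0 * x

def iterated_update(x_prior, p_prior, z, r, iterations):
    """Gauss-Newton form of the Kalman measurement update.

    Each pass re-linearizes h around the latest estimate x_i while the
    prior (x_prior, p_prior) stays fixed. With iterations=1 this reduces
    to the standard EKF update.
    """
    x_i = x_prior
    for _ in range(iterations):
        H = jac_h(x_i)
        K = p_prior * H / (H * p_prior * H + r)
        x_i = x_prior + K * (z - h(x_i) - H * (x_prior - x_i))
    return x_i

x_true = 3.0
z = h(x_true)  # noise-free measurement, to keep the comparison simple
x_ekf = iterated_update(1.0, 1.0, z, 0.1, iterations=1)
x_iter = iterated_update(1.0, 1.0, z, 0.1, iterations=10)
print(f"single EKF update error: {abs(x_ekf - x_true):.3f}")
print(f"iterated update error:   {abs(x_iter - x_true):.3f}")
```

In this toy setup the single linearization at a poor prior overshoots, while repeated re-linearization settles close to the value that balances the prior against the measurement.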


I actively participated in UMN's academic partnership with Google ATAP on Project Tango.

Real-time Vision-aided Inertial Navigation on a cellphone


Autonomous Camera-IMU based localization for a Parrot AR.Drone quad-rotor


The miniaturization, reduced cost, and increased accuracy of cameras and inertial measurement units (IMUs) make them ideal sensors for determining the 3D position and attitude of vehicles navigating in GPS-denied areas.

In particular, fast and highly dynamic motions can be precisely estimated over short periods of time by fusing the rotational velocity and linear acceleration measurements provided by the IMU's gyroscopes and accelerometers, respectively. On the other hand, errors caused by integrating the bias and noise in the inertial measurements can be significantly reduced by processing observations of point features detected in camera images, in what is known as a vision-aided inertial navigation system (V-INS). In this video, we present the latest demonstration of a V-INS implementation whose computational complexity is linear in the number of features tracked by the camera, enabling real-time operation. This implementation is aimed at enabling quadrotors to navigate with high precision in GPS-denied areas using only a camera and an IMU.
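To make the drift argument concrete, here is a minimal 1D sketch of how a constant accelerometer bias turns into position error when inertial measurements are integrated on their own. The bias value, sample rate, durations, and the function name `integrate_position` are assumptions for illustration, not parameters of the system shown in the video.

```python
# Dead-reckoning drift from a constant accelerometer bias (1D, device at rest).
# The bias, sample rate, and durations are illustrative assumptions.

def integrate_position(accels, dt):
    """Double-integrate acceleration samples into position (zero initial state)."""
    velocity = 0.0
    position = 0.0
    for a in accels:
        # Constant-acceleration update over one sample interval.
        position += velocity * dt + 0.5 * a * dt * dt
        velocity += a * dt
    return position

bias = 0.05  # m/s^2, hypothetical accelerometer bias
dt = 0.01    # s, i.e. a 100 Hz IMU

for seconds in (1, 10, 60):
    n = int(round(seconds / dt))
    drift = integrate_position([bias] * n, dt)
    print(f"{seconds:>3d} s -> {drift:.3f} m of position error")
```

The error follows 0.5 · bias · t², so it grows quadratically with time; this is the kind of drift that the camera observations are used to bound.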

Real-time implementation of V-INS


These two videos demonstrate the performance of our real-time implementation of V-INS odometry algorithms as described in [J2, J3, C2, C3].


Improving estimator consistency in Vision-aided Inertial Navigation


This video demonstrates the experimental results of the Observability-Constrained Vision-aided Inertial Navigation System (OC-VINS) presented in:

J. A. Hesch, D. G. Kottas, S. L. Bowman and S. I. Roumeliotis, "Camera-IMU-based Localization: Observability Analysis and Consistency Improvement", which was submitted to the International Journal of Robotics Research. The left panel displays images from the camera used during the experiment. The right panel depicts the 3D position and orientation as the sensing package traversed a ~550 m trajectory through Walter Library at the University of Minnesota. The bottom panel depicts the z-axis estimate (altitude), which shows the package as it changes floors.

Camera - 3D LIDAR intrinsic and extrinsic calibration


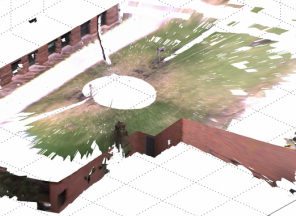

In [J1], [C1], we use methods from algebraic geometry to perform a high-accuracy extrinsic calibration between a high-speed 3D LIDAR (Velodyne) and a spherical camera (Ladybug).

Qualitative evidence of the achieved accuracy was demonstrated by producing photo-realistic reconstructions of indoor and outdoor scenes.